Data-driven ad creatives: boost ROI with smarter testing

TL;DR:

- Data-driven creative decisions improve performance and reduce guesswork in advertising.

- Testing methods like A/B, multivariate, and AI analysis identify winning elements effectively.

- Balancing data insights with creative risk prevents ads from becoming predictable and boring.

Most performance marketers believe their best ad creatives come from sharp instincts, strong brand sense, or a designer who just gets it. The reality is harder to swallow: instinct alone leaves serious money on the table. The marketers consistently hitting their ROAS targets are the ones treating creative decisions the same way they treat bid strategy, which is with data, structure, and repeatable testing. This article walks you through why data is now the foundation of effective ad creative work, which data sources actually matter, how to run tests that produce real answers, and how to turn those answers into better-performing ads.

Table of Contents

- Why data matters in building effective ad creatives

- The essential data sources for optimizing creatives

- Testing methods: A/B, multivariate, and AI-driven analysis

- Applying insights: From data to creative improvement

- Why over-focusing on data can backfire—and how to balance creativity and numbers

- Next steps: Power up your ad creative workflow with POPJAM.IO

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Data fuels creative wins | Top-performing ad campaigns rely on data-driven insights, not guesswork, to refine visuals, copy, and calls to action. |
| Right metrics matter most | Focusing on actionable data sources helps creative teams target real improvements and avoid distractions. |
| Test, learn, repeat | Regular A/B, multivariate, and AI-driven tests enable a continuous cycle of creative growth and higher ROI. |
| Balance art with analytics | The best results come from blending creative talent with the discipline of data-guided experimentation. |

Why data matters in building effective ad creatives

For most of advertising history, creative decisions lived in the gut. A creative director liked a headline. A founder preferred a certain color. A team voted on which image felt right. The problem with gut-driven creative is that it confuses preference with performance. What your team finds compelling and what your audience responds to are often two completely different things.

Data changes that equation. When you ground creative decisions in evidence, you stop guessing and start learning. Ad feedback analysis becomes a feedback loop rather than a post-mortem. You can see, in measurable terms, which hooks grabbed attention, which visuals drove clicks, and which CTAs converted.

Here are the core data types e-commerce teams can actually use:

- Click-through rate (CTR): Measures how compelling your creative is at the impression level. Low CTR usually points to a weak hook or irrelevant visual.

- Conversion rate per creative variant: Tells you which ad actually drives purchases, not just clicks.

- Audience demographic breakdowns: Reveals whether your creative is resonating with your intended segment or pulling in the wrong crowd.

- Creative element performance: Isolates which specific component (headline, image, CTA button color) is doing the heavy lifting.

- Engagement rate: Tracks saves, shares, and comments, which signal emotional resonance beyond the click.

What most marketers get wrong is running a single creative, watching it underperform, and concluding the audience is wrong. The audience is rarely wrong. The creative is untested. Research on AI ad creative ROI consistently shows that teams running structured creative tests outperform those relying on single-variant launches.

“Data drives ad creative optimization through granular analysis, A/B and multivariate testing, and AI-powered tagging to identify winning elements like hooks, visuals, and CTAs.”

The shift from subjective to objective creative decision-making is not about removing human judgment. It is about giving that judgment better inputs. Data tells you what is working. Your creative instincts tell you why and what to try next.

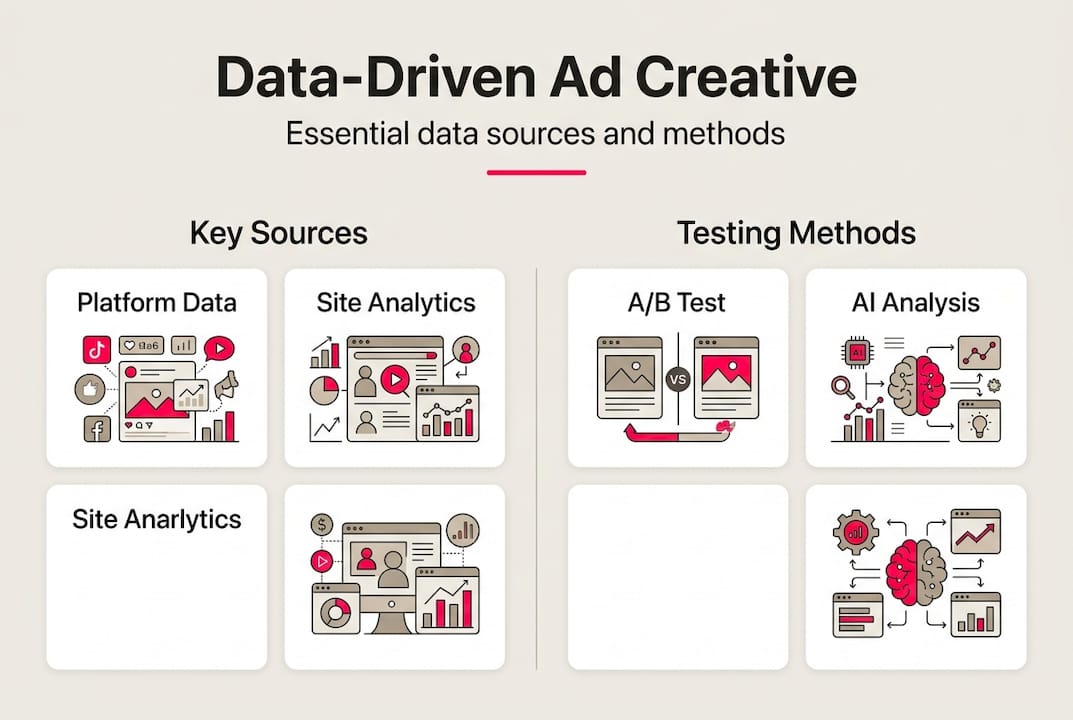

The essential data sources for optimizing creatives

Not all data is created equal. Marketers who try to act on every available metric end up paralyzed or chasing the wrong signals. The goal is to identify which sources give you the clearest picture of creative performance and focus your energy there.

| Data source | Best use case | Common pitfall |
|---|---|---|
| Ad platform analytics (Meta, Google, TikTok) | CTR, CPM, conversion tracking per creative | Vanity metrics like reach without conversion context |
| Site analytics (GA4, Shopify) | Post-click behavior, bounce rate, revenue per session | Attribution gaps between ad click and purchase |
| Social listening tools | Audience sentiment, trending language, emotional triggers | Too qualitative without a structured analysis process |
| Survey and qualitative feedback | Understanding why audiences respond to or ignore an ad | Small sample sizes skewing conclusions |
| Heatmaps and session recordings | How users interact with landing pages tied to creatives | Requires significant traffic volume to be meaningful |

The most actionable creative data comes from your ad platform analytics paired with your site analytics. Together, they tell you the full story: did the ad get clicked, and did that click lead to revenue? Everything else is supporting context.

When building high-impact ad creatives, the smartest teams prioritize split test results above all other data sources because they give you controlled, comparable answers. Social media ad examples that perform well across industries almost always share one trait: they were tested before scaling.

Here is what to prioritize when parsing multiple data streams:

- Start with conversion data, not engagement data

- Cross-reference CTR with conversion rate to catch misleading creative that earns clicks but does not sell

- Look for patterns across at least three to five creative variants before drawing conclusions

- Treat qualitative feedback as hypothesis fuel, not confirmation

Pro Tip: Set up a simple creative performance dashboard that pulls CTR, conversion rate, and cost per acquisition side by side for each active variant. Reviewing this weekly takes 20 minutes and prevents you from scaling a creative that looks good but does not convert.
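A dashboard like this does not need special tooling to start. The following is a minimal Python sketch, assuming you can export impressions, clicks, conversions, and spend per variant from your ad platform. The `VariantStats` fields, the function names, and the sample numbers are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Raw counts for one creative variant (field names are illustrative)."""
    name: str
    impressions: int
    clicks: int
    conversions: int
    spend: float


def summarize(v: VariantStats) -> dict:
    """Return CTR, conversion rate, and cost per acquisition for one variant."""
    ctr = v.clicks / v.impressions if v.impressions else 0.0
    cvr = v.conversions / v.clicks if v.clicks else 0.0
    cpa = v.spend / v.conversions if v.conversions else None
    return {"name": v.name, "ctr": ctr, "cvr": cvr, "cpa": cpa}


def dashboard(variants: list[VariantStats]) -> list[dict]:
    """Summarize all variants, lowest cost per acquisition first."""
    rows = [summarize(v) for v in variants]
    # Variants with no conversions have no CPA, so they sort last.
    return sorted(rows, key=lambda r: (r["cpa"] is None, r["cpa"] or 0.0))


# Made-up numbers for illustration only.
variants = [
    VariantStats("A", impressions=10_000, clicks=300, conversions=6, spend=150.0),
    VariantStats("B", impressions=10_000, clicks=180, conversions=9, spend=150.0),
]

for row in dashboard(variants):
    cpa = f"${row['cpa']:.2f}" if row["cpa"] is not None else "n/a"
    print(f"{row['name']}: CTR {row['ctr']:.2%}  CVR {row['cvr']:.2%}  CPA {cpa}")
```

In this made-up data, variant A wins on CTR but variant B converts better and costs less per acquisition, which is exactly the pattern a side-by-side view is meant to expose.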

Testing methods: A/B, multivariate, and AI-driven analysis

Knowing you need to test is one thing. Running a test that actually gives you usable answers is another. There are three main approaches, and each serves a different purpose.

A/B testing isolates one variable at a time. You run two versions of an ad where everything is identical except one element, such as the headline, the image, or the CTA. The result tells you exactly which version of that one element performs better. It is the cleanest test, but it is slow if you have many variables to evaluate.

Multivariate testing changes multiple elements simultaneously across several variants. It is faster for identifying winning combinations, but it requires significantly more traffic to reach statistical significance. Without enough impressions, your results are noise.
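To see why multivariate tests need so much traffic, it helps to count the combinations. The Python sketch below is illustrative only: the element lists are hypothetical, and the 1,000-impressions figure is a rough directional floor, not a platform rule.

```python
from itertools import product

# Hypothetical creative elements; swap in your own options.
headlines = ["benefit-led", "urgency-led"]
images = ["lifestyle", "product-only", "ugc"]
ctas = ["Shop Now", "Find Your Fit"]

# Full-factorial matrix: every headline x image x CTA combination.
matrix = list(product(headlines, images, ctas))

# Assumed rough floor of impressions per variant for directional data.
IMPRESSIONS_PER_VARIANT = 1_000

print(f"{len(matrix)} variants")
print(f"{len(matrix) * IMPRESSIONS_PER_VARIANT:,} impressions minimum")
```

Two headlines, three images, and two CTAs already produce 12 variants, and each added option multiplies the total. That multiplication is why traffic, not ideas, is usually the limiting factor.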

AI-driven creative analysis is where things get interesting. AI-powered tagging can analyze thousands of creative variants, identify patterns in what is winning, and surface insights no human analyst could catch at scale. It does not replace testing, but it dramatically accelerates the learning cycle.

Here is a simple framework for planning a creative test:

- Define your hypothesis: “Changing the headline from benefit-led to urgency-led will increase CTR by 15%.”

- Isolate the variable you are testing (for A/B) or define the combination matrix (for multivariate).

- Set your success metric before launching: CTR, conversion rate, or cost per action.

- Determine your minimum sample size. As a rough floor, plan for at least 1,000 impressions per variant for directional data; confirming a small lift with statistical confidence usually takes far more.

- Run the test for at least a full week to account for day-of-week performance variation.

- Analyze results and document findings in a shared creative log.
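For the sample-size step, a rough estimate beats a guess. The sketch below uses the standard normal-approximation formula for comparing two proportions. It is one common approach, not the only one, and the 2% baseline CTR, the 5% significance level, and the 80% power are assumptions chosen for illustration.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate impressions per variant needed to detect a relative lift.

    Normal-approximation formula for a two-sided test comparing two
    proportions (for example, the CTR of variant A versus variant B).
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothesis from the framework: a 15% relative CTR lift.
# The 2% baseline CTR is an assumed figure, not a benchmark.
needed = sample_size_per_variant(baseline_rate=0.02, relative_lift=0.15)
print(f"About {needed:,} impressions per variant")
```

With these assumed inputs the estimate lands in the tens of thousands of impressions per variant, far above what is needed for a directional read. Smaller expected lifts or lower baseline rates push the number higher still.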

| Method | Best for | Pros | Cons |

|---|---|---|---|

| A/B testing | Single element decisions | Clean, clear results | Slow with many variables |

| Multivariate | Combination optimization | Faster broad learning | Needs high traffic volume |

| AI analysis | Pattern recognition at scale | Surfaces hidden insights | Requires good data inputs |

Using an ad testing tool that supports pre-launch simulation can dramatically cut wasted spend. And if you want to test ad creatives before launch, AI-driven persona simulation lets you get directional feedback without burning real budget.

Pro Tip: Never call a test early because one variant is trending ahead. Wait for statistical significance, ideally 95% confidence, before making decisions. Calling tests early is one of the most common sources of false positives in creative optimization.
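One way to check for significance is a two-proportion z-test, sketched below in Python using only the standard library. This is a simplified illustration, not how any particular ad platform computes its results, and the click counts are made up.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(successes_a: int, trials_a: int,
                           successes_b: int, trials_b: int) -> float:
    """Two-sided p-value for the difference between two rates (pooled z-test)."""
    rate_a = successes_a / trials_a
    rate_b = successes_b / trials_b
    pooled = (successes_a + successes_b) / (trials_a + trials_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / trials_a + 1 / trials_b))
    if std_err == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (rate_b - rate_a) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Same click rates (2.0% vs 2.6%), measured early and at full volume.
p_early = two_proportion_p_value(20, 1_000, 26, 1_000)
p_full = two_proportion_p_value(200, 10_000, 260, 10_000)

for label, p in [("early", p_early), ("full", p_full)]:
    verdict = "significant" if p < 0.05 else "not significant"
    print(f"{label}: p = {p:.3f} ({verdict} at 95% confidence)")
```

The early read and the full read show the same gap between variants, but only the full read clears the 95% bar. Note that a test like this assumes you evaluate it once, at the sample size you planned; checking repeatedly and stopping at the first significant result inflates the false positive rate.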

Applying insights: From data to creative improvement

Data without action is just a report. The real value of creative testing comes from what you do with the results. Most teams collect data and then argue about what it means. High-performing teams build a repeatable process for translating test results into better creative.

Here is how to move from raw results to concrete creative decisions:

- Identify the winning element: Was it the image, the headline, the CTA, or the format? Be specific.

- Understand why it won: Did it speak more directly to a pain point? Did it create more urgency? Use qualitative context to explain the quantitative result.

- Apply the learning forward: Do not just swap in the winner. Extract the principle and apply it across your next batch of creative variants.

- Flag what to drop: If a creative element consistently underperforms across multiple tests, retire it. Do not keep testing the same losing hypothesis.

- Document everything: A creative insight log is one of the most underused assets in performance marketing.

Here is a real-world example. A DTC apparel brand was running two ad variants with identical visuals but different CTAs: “Shop Now” versus “Find Your Fit.” The second CTA drove a 22% higher conversion rate. The insight was not just “use this CTA.” It was that their audience responded to personalization language over generic action commands. That principle then informed every subsequent creative brief.

AI ad creative automation tools can accelerate this cycle by generating new variants based on winning patterns automatically. Paired with insights about top ad formats for your vertical, you can iterate faster without proportionally increasing workload.

Pro Tip: Always retest after making changes. Creative optimization is not a one-time project. Audience preferences shift, platform algorithms evolve, and what worked last quarter may not work this quarter. Build retesting into your regular campaign rhythm.

Why over-focusing on data can backfire—and how to balance creativity and numbers

Here is something most optimization guides will not tell you: data can make your ads boring. When every creative decision is filtered through what the numbers already validated, you end up optimizing toward the mean. You produce ads that are reliably average instead of occasionally exceptional.

We have seen this happen with teams that run tight testing programs. They get so good at iterating on winners that they stop taking creative risks. Their ads become predictable. Audiences tune them out. Creative testing balance is not just a nice idea. It is a strategic necessity.

The fix is treating data as a floor, not a ceiling. Use your test results to establish what works reliably, and then allocate a portion of your creative budget, around 20%, to experiments that have no historical precedent. These are the tests where you try a completely different format, a new emotional angle, or a creative concept that your data would never have suggested.

Some of the most memorable ad campaigns in e-commerce history came from ideas that looked like bad bets on paper. Data told the story after the fact. Creativity made the bet first. The best performance marketers know when to trust the numbers and when to push past them.

Next steps: Power up your ad creative workflow with POPJAM.IO

You now have a clear framework for moving from creative guesswork to data-driven optimization. The next step is putting it into practice without the manual overhead that slows most teams down.

POPJAM.IO is built specifically for performance marketers who want to move fast without sacrificing creative quality. Use the AI ad generator to produce platform-native creative variants in minutes, run pre-launch simulations with synthetic audience personas, and validate your hooks before spending a dollar. The ad testing tool gives you structured testing workflows built for e-commerce teams. And the creative automation platform handles the iteration cycle so your team can focus on strategy, not production. Start optimizing your ad creatives with data today.

Frequently asked questions

What are the best metrics for evaluating ad creative performance?

The top metrics include click-through rate, conversion rate, engagement, and cost per action. Each one reveals how your creative drives real business outcomes, and granular creative analysis helps you connect those metrics to specific elements like visuals or CTAs.

How often should you test new ad creatives with data?

Run new creative tests every campaign cycle and after any major strategy, audience, or market change. Continuous creative testing keeps your insights fresh and prevents performance decay from ad fatigue.

Can small e-commerce brands afford data-driven ad creative testing?

Yes. Free and affordable platforms now make creative testing accessible even for small budgets, and AI tools significantly reduce the time and cost of generating and analyzing variants.

Does focusing on data mean losing creativity in ads?

No. Used wisely, data highlights what resonates with your audience and informs bigger creative ideas without stifling originality. AI-powered tagging can actually surface creative directions you would never have considered on your own.