Ad feedback analysis: Boost campaign ROI with data

Most marketers treat ad feedback like a post-mortem: something you review after the campaign has already burned through budget. That assumption is expensive. Skipping pre-launch feedback analysis is one of the most common reasons campaigns underperform, and the fix is simpler than most teams realize. Analyzing ad feedback validates performance, aligns creatives with audience needs, identifies optimization opportunities, and improves budget efficiency by avoiding spend on underperformers. This guide walks you through why feedback analysis matters, which methods actually work, and how to build a repeatable process that sharpens every campaign before and after it goes live.

Table of Contents

- Why ad feedback matters for campaign success

- Key methodologies: Combining quantitative and qualitative feedback

- Pre-launch feedback vs. post-launch analysis: When to analyze

- Applying ad feedback: Step-by-step optimization process

- Take your ad creative testing to the next level

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Feedback prevents overspending | Analyzing feedback before launching ads helps avoid wasting budget on underperforming creatives. |
| Combine metrics and insights | Using both quantitative and qualitative feedback leads to smarter campaign decisions and better audience targeting. |
| Pre-launch testing is critical | Early creative testing catches issues while they are cheap to fix; skipping it is linked to overspend of up to 60% on poor creative. |
| Optimize for profit-tied KPIs | Focusing on conversion rate, ROAS, and CPA ensures your efforts boost actual returns, not just impressions. |
| Refresh creatives based on feedback | Monitor for ad fatigue and use feedback-driven updates to keep engagement high and ROI strong. |

Why ad feedback matters for campaign success

Running ads without analyzing feedback is like driving with your eyes closed. You might get somewhere, but the odds are not in your favor. Feedback is the signal that tells you whether your creative is resonating, your targeting is accurate, and your message is landing the way you intended.

Here is what structured ad feedback analysis actually does for your campaigns:

- Validates effectiveness: Confirms whether your creative is driving the actions you want, not just impressions.

- Aligns creative with audience expectations: Surfaces mismatches between what you think your audience wants and what they actually respond to.

- Identifies optimization opportunities: Pinpoints which elements (headlines, visuals, calls to action) are dragging performance down.

- Improves budget allocation: Stops you from pouring money into underperforming variants by flagging them early.

As one performance marketing resource puts it:

“Analyzing ad feedback validates performance, aligns creatives with audience needs, identifies optimization opportunities, and improves budget efficiency by avoiding spend on underperformers.”

The practical implication is significant. When you treat feedback as a continuous input rather than a one-time review, you build a compounding advantage. Each campaign teaches you something that makes the next one sharper. Teams that skip this step are essentially starting from zero every time.

If you want a faster path to better creative decisions, exploring ad creative testing tips can help you build that feedback loop from the ground up.

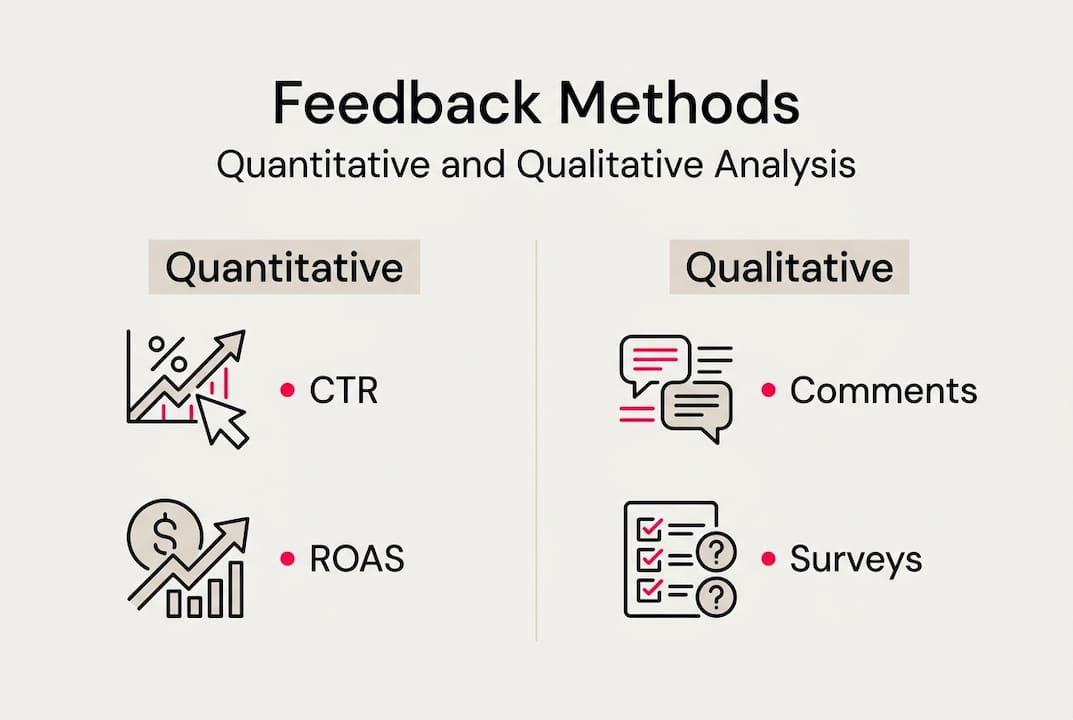

Key methodologies: Combining quantitative and qualitative feedback

Feedback analysis is not a single tool or tactic. It is a combination of methods that, when used together, give you a complete picture of what is working and why.

Quantitative metrics are your starting point. These are the numbers that tell you what happened:

| Metric | What it measures | Why it matters |
|---|---|---|
| CTR (click-through rate) | Ad engagement | Shows if your creative earns attention |
| Conversion rate | Actions taken post-click | Measures real business impact |
| ROAS (return on ad spend) | Revenue per dollar spent | Ties creative directly to profit |
| CPA (cost per acquisition) | Cost to acquire one customer | Benchmarks efficiency across variants |
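To make the four metrics concrete, here is a minimal Python sketch of how each one is typically calculated from raw campaign counts. The `AdStats` class, its field names, and the sample numbers are all hypothetical; your ad platform's export will use its own column names and attribution rules.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class AdStats:
    """Raw counts for one ad variant (hypothetical field names)."""
    impressions: int
    clicks: int
    conversions: int
    spend: float    # total media spend
    revenue: float  # revenue attributed to the ad


def safe_div(numerator: float, denominator: float) -> Optional[float]:
    """Return None instead of raising when the denominator is zero."""
    return numerator / denominator if denominator else None


def kpis(stats: AdStats) -> Dict[str, Optional[float]]:
    """Compute the four metrics from the table above."""
    return {
        "ctr": safe_div(stats.clicks, stats.impressions),           # clicks per impression
        "conversion_rate": safe_div(stats.conversions, stats.clicks),  # conversions per click
        "roas": safe_div(stats.revenue, stats.spend),               # revenue per dollar spent
        "cpa": safe_div(stats.spend, stats.conversions),            # spend per conversion
    }


# Made-up numbers, for illustration only.
example = AdStats(impressions=10_000, clicks=200, conversions=10,
                  spend=500.0, revenue=1_500.0)
print(kpis(example))
```

Returning `None` for an undefined ratio (for example, CPA with zero conversions) keeps a brand-new variant from looking like it has a CPA of zero.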

Qualitative feedback fills in the gaps the numbers cannot explain. Surveys, comment analysis, and sentiment tracking reveal the emotional and contextual reasons behind the data. Someone might click your ad but not convert because the landing page tone felt off. Quantitative data shows the drop. Qualitative feedback explains it.

Key methodologies include combining quantitative metrics like CTR, conversions, ROAS, and CPA with qualitative data from surveys and comments, segmenting by demographics and channels, running A/B tests, and using AI tools for sentiment and thematic analysis.

Segmentation is where most teams leave performance on the table. Breaking feedback down by platform, age group, device type, or creative format reveals patterns that aggregate data hides. A video ad might crush it on TikTok and flatline on LinkedIn. Without segmentation, you would never know.
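A small Python sketch shows how an aggregate number can hide a segment-level gap. The rows, platform values, and the `ctr_by_segment` helper are hypothetical; the same grouping works for age group, device type, or creative format if your export includes those columns.

```python
from collections import defaultdict

# Hypothetical export rows: one row per ad per platform (made-up numbers).
rows = [
    {"platform": "TikTok", "impressions": 5000, "clicks": 150},
    {"platform": "TikTok", "impressions": 3000, "clicks": 90},
    {"platform": "LinkedIn", "impressions": 4000, "clicks": 20},
]


def ctr_by_segment(rows, key):
    """Sum impressions and clicks per segment, then compute CTR per segment."""
    totals = defaultdict(lambda: {"impressions": 0, "clicks": 0})
    for row in rows:
        segment = totals[row[key]]
        segment["impressions"] += row["impressions"]
        segment["clicks"] += row["clicks"]
    return {
        name: t["clicks"] / t["impressions"]
        for name, t in totals.items()
        if t["impressions"]  # skip segments with no impressions
    }


segment_ctr = ctr_by_segment(rows, "platform")
overall_ctr = sum(r["clicks"] for r in rows) / sum(r["impressions"] for r in rows)

print("overall:", round(overall_ctr, 4))
for name, ctr in segment_ctr.items():
    print(name, round(ctr, 4))
```

In this made-up data the blended CTR sits between the two platforms, so on its own it would hide both the strong segment and the weak one.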

Pro Tip: Use AI-powered ad insights tools to run thematic analysis on comment sections and survey responses at scale. Manual review of qualitative data is slow and inconsistent. AI surfaces patterns in minutes.
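If you want a feel for what thematic analysis produces before adopting a tool, a plain keyword tally is a rough stand-in. The Python sketch below is deliberately simple and is not how any particular AI product works; the `THEMES` map, its keywords, and the sample comments are all hypothetical.

```python
from collections import Counter

# Hypothetical theme -> keyword map. A real analysis would need a richer
# vocabulary, and substring matching can misfire (e.g. "cost" in "costume").
THEMES = {
    "price": ("expensive", "price", "cost"),
    "trust": ("scam", "fake", "legit"),
    "clarity": ("confusing", "unclear"),
}


def tally_themes(comments):
    """Count how many comments touch each theme (once per comment per theme)."""
    counts = Counter()
    for comment in comments:
        text = comment.lower()
        for theme, keywords in THEMES.items():
            if any(keyword in text for keyword in keywords):
                counts[theme] += 1
    return counts


# Made-up comments, for illustration only.
comments = [
    "Way too expensive for what it is",
    "Is this legit or a scam?",
    "Price seems high",
    "Not sure what the offer is, confusing",
    "Love it!",
]

theme_counts = tally_themes(comments)
print(theme_counts.most_common())
```

A tally like this only surfaces themes you already thought to look for, which is the main limitation a dedicated sentiment or thematic tool is meant to address.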

Pre-launch feedback vs. post-launch analysis: When to analyze

There is a persistent myth that feedback only becomes useful once your campaign is live. In reality, the most valuable feedback window is before you spend a single dollar on media.

Pre-launch testing catches problems when they are cheap to fix. Showing creative concepts to a simulated or real audience before launch lets you identify messaging gaps, visual confusion, and tone mismatches without paying for the lesson in wasted impressions.

Post-launch analysis serves a different purpose. It tracks performance decay, spots ad fatigue, and informs refresh cycles, with CTR drops signaling when a creative needs a refresh. In short, pre-launch testing reduces risk and prevents wasted budget, while post-launch analysis tells you when a live creative has run its course.

Here is how the two approaches compare:

| Factor | Pre-launch testing | Post-launch analysis |
|---|---|---|
| Timing | Before media spend | During and after campaign |
| Primary goal | Prevent costly mistakes | Optimize and refresh |
| Cost of action | Very low | Moderate to high |
| Key tools | Simulations, surveys, personas | Analytics dashboards, A/B tests |
| Risk level | Minimal | Higher if delayed |

The smartest teams use both. Pre-launch testing answers “will this work?” Post-launch analysis answers “how is this performing and what needs to change?”

Here is a practical sequence to follow:

- Define your KPIs before creative development begins.

- Run pre-launch simulations or concept tests with target audience proxies.

- Launch with a controlled A/B test structure.

- Monitor quantitative metrics weekly for early fatigue signals.

- Collect qualitative feedback through comment monitoring and micro-surveys.

- Refresh or iterate based on combined findings.

Pro Tip: Do not wait for CTR to collapse before refreshing creative. A drop of 0.1 to 0.2 percentage points over two weeks is an early warning sign. Use pre-launch ad testing tools to build a library of pre-validated concepts ready to deploy when fatigue hits.
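The early-warning rule in that tip is easy to automate. The Python sketch below assumes you track CTR as a weekly percentage and compares the latest week against the value two weeks earlier; the function name, the default threshold of 0.1 percentage points, and the sample series are illustrative choices, not a platform feature.

```python
def fatigue_warning(weekly_ctr_pct, threshold_pp=0.1, window_weeks=2):
    """Flag a CTR drop of at least `threshold_pp` percentage points
    between the latest week and the week `window_weeks` earlier.

    `weekly_ctr_pct` is a list of weekly CTR values in percent, oldest first.
    """
    if len(weekly_ctr_pct) <= window_weeks:
        return False  # not enough history to compare
    drop = weekly_ctr_pct[-1 - window_weeks] - weekly_ctr_pct[-1]
    # Round to sidestep floating-point noise near the threshold.
    return round(drop, 4) >= threshold_pp


# Made-up weekly CTR series (percent), for illustration only.
fading = [1.50, 1.45, 1.38]   # down 0.12 points over two weeks
steady = [1.50, 1.49, 1.47]   # down 0.03 points over two weeks

print(fatigue_warning(fading))
print(fatigue_warning(steady))
```

Weekly values are used here because daily CTR tends to be noisy; if you work from daily data, averaging each week first is one reasonable way to feed this check.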

Applying ad feedback: Step-by-step optimization process

Collecting feedback is only half the job. The other half is turning those insights into concrete creative decisions. Here is a repeatable workflow that performance marketers and e-commerce teams can apply immediately.

- Audit your current creative library. Identify which ads are underperforming based on ROAS and CPA benchmarks. Flag them for analysis, not just replacement.

- Pull both data types. Export quantitative metrics from your ad platform and gather qualitative signals from comments, surveys, or simulated audience feedback.

- Identify patterns, not outliers. One bad comment is noise. A recurring theme across 50 comments is a signal. Look for patterns in language, sentiment, and objections.

- Form a hypothesis. Based on your findings, make a specific prediction. For example: “Changing the headline from benefit-focused to problem-focused will increase CTR by 15%.”

- Build and test a variant. Create the new version and run it against the control with enough budget to reach statistical significance.

- Document and scale winners. When a variant wins, extract the principle behind it and apply it across your broader creative strategy.
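The "build and test a variant" step depends on knowing when a result is statistically significant. This guide does not name a specific test, so as an assumption the Python sketch below uses a two-proportion z-test on CTR, one common choice for comparing two click rates. The function name and the sample counts are hypothetical.

```python
import math


def two_proportion_z_test(clicks_a, n_a, clicks_b, n_b):
    """Two-sided z-test for a difference in CTR between control (a) and variant (b).

    Returns (z, p_value). Uses the pooled click rate for the standard error.
    """
    p_a = clicks_a / n_a
    p_b = clicks_b / n_b
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so no detectable difference
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up counts: control at 2.0% CTR, variants at 2.3% and 2.6%,
# each with 10,000 impressions.
z_small, p_small = two_proportion_z_test(200, 10_000, 230, 10_000)
z_large, p_large = two_proportion_z_test(200, 10_000, 260, 10_000)

print("15% lift:", "significant" if p_small < 0.05 else "not yet significant")
print("30% lift:", "significant" if p_large < 0.05 else "not yet significant")
```

With these made-up counts, a 15% relative lift does not clear the conventional 0.05 threshold at 10,000 impressions per arm, which is why the test needs enough budget behind it before you call a winner.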

Pre-launch testing via simulations cuts waste. Studies show overspend of up to 60% on poor creative when testing is skipped. Integrating competitor intelligence and industry patterns into your hypothesis formation also sharpens your starting point significantly.

Common pitfalls to avoid:

- Chasing vanity metrics. Likes and shares feel good but rarely correlate with revenue. Anchor every decision to profit-tied KPIs.

- Testing too many variables at once. Change one element per test or you will not know what drove the result.

- Ignoring platform context. An insight from Meta does not automatically transfer to TikTok. Segment your analysis by platform.

- Skipping the documentation step. Insights that are not recorded are lost. Build a simple creative learnings log that your whole team can access.

Pro Tip: Use competitor ad data as a hypothesis generator, not a blueprint. Seeing what formats and angles competitors are running tells you what the market is responding to. Combine that with your own audience feedback using ad creative optimization steps to build campaigns that are both informed and differentiated.

Take your ad creative testing to the next level

Understanding feedback methodology is one thing. Having the infrastructure to act on it fast is another. That gap is where most teams lose time and budget.

POPJAM.io is built specifically for performance marketers and e-commerce teams who need to move from insight to creative output without the usual bottlenecks. The platform generates platform-native ad creatives across Meta, TikTok, Google, LinkedIn, and Reddit, and lets you test them against psychographic synthetic personas before a single dollar goes to media. You can simulate audience reactions, run pre-launch feedback loops, and export winning variants directly to your ad platforms. Check out the free AI marketing tools to see what pre-launch testing looks like in practice, or review creative testing pricing to find the right plan for your team’s output needs.

Frequently asked questions

What metrics should I prioritize when analyzing ad feedback?

Focus on profit-tied KPIs like conversion rate, ROAS, and CPA. Vanity metrics like impressions and reach feel meaningful but rarely connect to actual revenue outcomes.

How can pre-launch ad feedback save my campaign budget?

Pre-launch testing catches creative weaknesses before media spend begins, and it typically costs less than 1% of total budget. Fixing issues early is dramatically cheaper than correcting underperformance after launch.

What’s the difference between quantitative and qualitative ad feedback?

Quantitative feedback covers measurable metrics like CTR and conversions. Qualitative feedback captures sentiment, comments, and thematic patterns that explain the reasons behind those numbers.

How do I spot ad fatigue through feedback analysis?

Watch for a CTR drop of 0.1 to 0.2 percentage points over about two weeks. Declining engagement rates alongside rising frequency are a reliable signal that your creative needs a refresh before performance collapses.